16.2: The Third Law of Thermodynamics


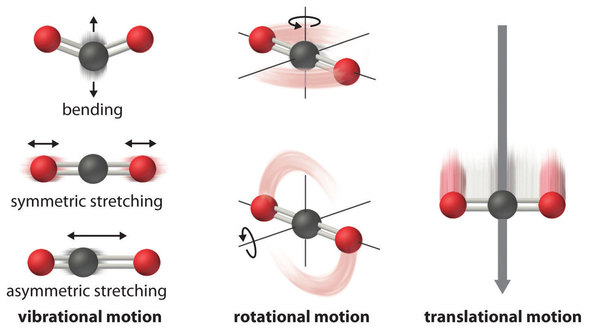

The atoms, molecules, or ions that compose a chemical system can undergo several types of molecular motion, including translation, rotation, and vibration (Figure \(\PageIndex{1}\)). The greater the molecular motion of a system, the greater the number of possible microstates and the higher the entropy. A perfectly ordered system with only a single microstate available to it would have an entropy of zero. The only system that meets this criterion is a perfect crystal at a temperature of absolute zero (0 K), in which each component atom, molecule, or ion is fixed in place within a crystal lattice and exhibits no motion (ignoring quantum zero-point motion).

This system may be described by a single microstate: its purity, perfect crystallinity, and complete lack of motion (at least classically; quantum mechanics requires some residual zero-point motion) mean there is only one possible location for each identical atom or molecule in the crystal (\(W = 1\)). According to the Boltzmann equation, the entropy of this system is zero.

\[\begin{align*} S&=k\ln W \\[4pt] &= k\ln(1) \\[4pt] &=0 \label{\(\PageIndex{5}\)} \end{align*}\]
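The Boltzmann equation above is easy to check numerically. The short Python sketch below is illustrative only: the helper name `boltzmann_entropy` is our own, and it assumes the exact SI value of the Boltzmann constant, \(k = 1.380649 \times 10^{-23}\) J/K. It shows that \(W = 1\) gives \(S = 0\) and that \(S\) rises as \(W\) grows.

```python
import math

# Boltzmann constant in J/K (exact SI value)
K_B = 1.380649e-23


def boltzmann_entropy(w: int) -> float:
    """Return S = k ln W, in J/K, for a system with W accessible microstates."""
    if w < 1:
        raise ValueError("W must be at least 1 (a system has at least one microstate)")
    return K_B * math.log(w)


# A perfect crystal at 0 K has exactly one microstate, so S = k ln 1 = 0.
print(boltzmann_entropy(1))  # 0.0
# More accessible microstates means higher entropy.
print(boltzmann_entropy(2) > boltzmann_entropy(1))  # True
```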

In practice, absolute zero is an ideal temperature that is unobtainable, and a perfect single crystal is also an ideal that cannot be achieved. Nonetheless, the combination of these two ideals constitutes the basis for the third law of thermodynamics: the entropy of any perfectly ordered, crystalline substance at absolute zero is zero.

The third law of thermodynamics has two important consequences: it defines the sign of the entropy of any substance at temperatures above absolute zero as positive, and it provides a fixed reference point that allows us to measure the absolute entropy of any substance at any temperature. In this section, we examine two different ways to calculate ΔS for a reaction or a physical change. The first, based on the definition of absolute entropy provided by the third law of thermodynamics, uses tabulated values of absolute entropies of substances. The second, based on the fact that entropy is a state function, uses a thermodynamic cycle similar to those discussed previously.

Standard-State Entropies

One way of calculating \(ΔS\) for a reaction is to use tabulated values of the standard molar entropy (\(\overline{S}^o\)), which is the entropy of 1 mol of a substance under standard pressure (1 bar). The standard molar entropy is usually tabulated at 298 K and is then denoted \(\overline{S}^o_{298}\). The units of \(\overline{S}^o\) are J/(mol•K). Unlike enthalpy or internal energy, absolute entropy values can be obtained by measuring the entropy change that occurs between the reference point of 0 K (corresponding to \(\overline{S} = 0\)) and 298 K (Tables T1 and T2).

As shown in Table \(\PageIndex{1}\), for substances with approximately the same molar mass and number of atoms, \(\overline{S}^o\) values fall in the order

\[\overline{S}^o(\text{gas}) \gg \overline{S}^o(\text{liquid}) > \overline{S}^o(\text{solid}).\]

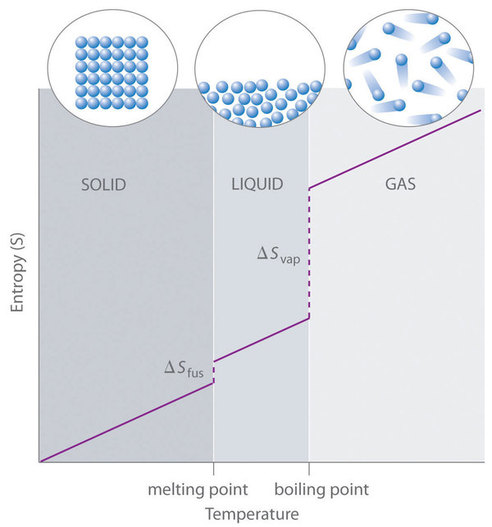

For instance, \(\overline{S}^o\) for liquid water is 70.0 J/(mol•K), whereas \(\overline{S}^o\) for water vapor is 188.8 J/(mol•K). Likewise, \(\overline{S}^o\) is 260.7 J/(mol•K) for gaseous \(\ce{I2}\) and 116.1 J/(mol•K) for solid \(\ce{I2}\). This order makes qualitative sense based on the kinds and extents of motion available to atoms and molecules in the three phases (Figure \(\PageIndex{1}\)). The correlation between physical state and absolute entropy is illustrated in Figure \(\PageIndex{2}\), which is a generalized plot of the entropy of a substance versus temperature.

The Third Law Lets Us Calculate Absolute Entropies

The absolute entropy of a substance at any temperature above 0 K must be determined by calculating the increments of heat \(q\) required to bring the substance from 0 K to the temperature of interest, and then summing the ratios \(q/T\). Two kinds of experimental measurements are needed:

- The enthalpies associated with any phase changes the substance may undergo within the temperature range of interest. Melting of a solid and vaporization of a liquid correspond to sizeable increases in the number of microstates available to accept thermal energy, so as these processes occur, energy flows into the system, filling these new microstates to the extent required to maintain a constant temperature (the melting or boiling point); these inflows of thermal energy correspond to the heats of fusion and vaporization. The entropy increase associated with a phase transition at temperature \(T\) is \[ \dfrac{ΔH_{trans}}{T},\] where \(ΔH_{trans}\) is the enthalpy of that transition (for example, \(ΔH_{fusion}\) for melting).

- The heat capacity \(C\) of a phase expresses the quantity of heat required to change the temperature by a small amount \(ΔT\), or more precisely, by an infinitesimal amount \(dT\). Thus the entropy increase brought about by warming a substance over a range of temperatures that does not encompass a phase transition is given by the sum of the quantities \(C \frac{dT}{T}\) for each increment of temperature \(dT\). This is of course just the integral

\[ S_{0 \rightarrow T} = \int _{0}^{T} \dfrac{C_p}{T} dT \label{eq20}\]

Because the heat capacity itself depends on temperature, the most precise determinations of absolute entropies require that the functional dependence of \(C_p\) on \(T\) be used in the integral in Equation \ref{eq20}, that is:

\[ S_{0 \rightarrow T} = \int _{0}^{T} \dfrac{C_p(T)}{T} dT. \label{eq21}\]
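As a rough illustration of Equation \ref{eq21}, the sketch below integrates \(C_p(T)/T\) numerically with the midpoint rule (our choice; any quadrature rule would do) and compares the result with the analytic answer. It assumes a hypothetical heat capacity of the Debye \(T^3\) form, \(C_p = aT^3\), with a made-up coefficient \(a\); for this form the integral is \(aT^3/3 = C_p(T)/3\). The function names are hypothetical.

```python
from typing import Callable


def entropy_from_heat_capacity(cp: Callable[[float], float], t_final: float, n: int = 10_000) -> float:
    """Approximate S(0 -> t_final), the integral of Cp(T)/T dT, with the midpoint rule.

    Midpoints never fall at T = 0, so the 0/0 form of Cp/T at absolute zero is avoided.
    """
    h = t_final / n
    total = 0.0
    for i in range(n):
        t_mid = (i + 0.5) * h
        total += cp(t_mid) / t_mid * h
    return total


A = 2.0e-4  # J/(mol*K^4), hypothetical Debye coefficient, not data for a real substance


def cp_debye(t: float) -> float:
    """Hypothetical low-temperature heat capacity Cp = a*T^3, in J/(mol*K)."""
    return A * t**3


t_final = 10.0  # K
s_numeric = entropy_from_heat_capacity(cp_debye, t_final)
s_exact = A * t_final**3 / 3  # integral of a*T^2 dT from 0 to T is a*T^3/3
print(f"numeric: {s_numeric:.6f} J/(mol*K), exact: {s_exact:.6f} J/(mol*K)")
```

With a real substance, `cp_debye` would be replaced by whatever functional form fits the measured heat capacities.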

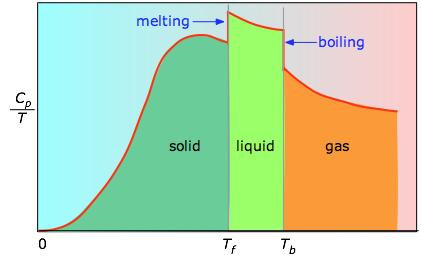

When this functional dependence is not known, one can measure the heat capacity over a series of narrow temperature increments \(ΔT\), plot \(C_p/T\) against \(T\), and measure the area under each section of the curve. The area under each section of the plot represents the entropy change associated with heating the substance through that interval \(ΔT\). To this must be added the entropies of melting, vaporization, and any solid-solid phase changes, each equal to the enthalpy of the transition divided by the temperature at which it occurs.
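The stepwise procedure just described can be sketched in Python as a trapezoidal estimate of the area under the \(C_p/T\) versus \(T\) curve, with the entropy of fusion \(ΔH_{fusion}/T_m\) added at the melting point. All numbers below are hypothetical, chosen only to show the bookkeeping for an imaginary substance that melts at 200 K, and the helper name `area_under_cp_over_t` is our own.

```python
def area_under_cp_over_t(temps: list[float], cps: list[float]) -> float:
    """Trapezoidal estimate of the area under a Cp/T versus T plot.

    temps are increasing temperatures in K (all > 0); cps are the measured
    heat capacities in J/(mol*K) at those temperatures.
    """
    if len(temps) != len(cps) or len(temps) < 2:
        raise ValueError("need two or more matching (T, Cp) points")
    area = 0.0
    for i in range(len(temps) - 1):
        # Each trapezoid approximates the entropy gained on heating from temps[i] to temps[i + 1].
        y1 = cps[i] / temps[i]
        y2 = cps[i + 1] / temps[i + 1]
        area += 0.5 * (y1 + y2) * (temps[i + 1] - temps[i])
    return area


# Hypothetical data for an imaginary substance (illustrative only, not real measurements)
solid_T, solid_Cp = [50.0, 100.0, 150.0, 200.0], [20.0, 35.0, 42.0, 48.0]
liquid_T, liquid_Cp = [200.0, 250.0, 298.0], [70.0, 72.0, 74.0]
dH_fus, T_m = 6000.0, 200.0  # J/mol and K, hypothetical

dS_50_to_298 = (area_under_cp_over_t(solid_T, solid_Cp)
                + dH_fus / T_m  # entropy of fusion, ΔH_fusion / T_m
                + area_under_cp_over_t(liquid_T, liquid_Cp))
print(f"{dS_50_to_298:.1f} J/(mol*K) gained between 50 K and 298 K")
```

Narrower temperature steps make the trapezoids follow the curve more closely, which is why real measurements use many closely spaced points.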

Values of \(C_p\) for temperatures near zero are not measured directly, but can be estimated from quantum theory. The cumulative areas from 0 K to any given temperature (Figure \(\PageIndex{3}\)) are then plotted as a function of \(T\), and any phase-change entropies such as

\[ΔS_{vap} = \dfrac{ΔH_{vap}}{T_b}\]

are added to obtain the absolute entropy at temperature \(T\). As shown in Figure \(\PageIndex{2}\) above, the entropy of a substance increases with temperature, and it does so for two reasons:

- As the temperature rises, more microstates become accessible, allowing thermal energy to be more widely dispersed. This is reflected in the gradual increase of entropy with temperature.

- The molecules of solids, liquids, and gases have increasingly greater freedom to move around, facilitating the spreading and sharing of thermal energy. Phase changes are therefore accompanied by large, discontinuous increases in entropy.
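The phase-change term \(ΔS_{vap} = ΔH_{vap}/T_b\) and the near-0 K estimate described above can each be sketched as a one-line function. Two assumptions are ours: we use the commonly quoted \(ΔH_{vap} \approx 40.65\) kJ/mol for water at its normal boiling point (373.15 K), and for the region near 0 K we pick the Debye \(T^3\) law, one standard quantum-theory estimate, under which the entropy below the lowest measured temperature is \(C_p(T_{low})/3\). The function names are hypothetical.

```python
def transition_entropy(delta_h: float, t_transition: float) -> float:
    """Entropy of a phase change at constant temperature: ΔS = ΔH / T.

    delta_h in J/mol, t_transition in K; result in J/(mol*K).
    """
    return delta_h / t_transition


def debye_low_t_entropy(cp_low: float) -> float:
    """Entropy from 0 K to the lowest measured temperature, assuming Cp = a*T^3.

    Integrating a*T^3 / T from 0 to T_low gives a*T_low^3 / 3 = Cp(T_low) / 3.
    """
    return cp_low / 3.0


# Water at its normal boiling point; ΔH_vap ≈ 40.65 kJ/mol is a commonly quoted value
dS_vap = transition_entropy(40_650.0, 373.15)
print(f"ΔS_vap(H2O) ≈ {dS_vap:.1f} J/(mol*K)")  # ΔS_vap(H2O) ≈ 108.9 J/(mol*K)
```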

Calculating \(\Delta S_{sys}\)

We can make careful calorimetric measurements to determine the temperature dependence of a substance’s entropy and to derive absolute entropy values under specific conditions. Standard molar entropies determined for one mole of substance at a pressure of 1 bar and a temperature of 298 K are given the label \(\overline{S}^o_{298}\). The standard entropy change (\(ΔS^o\)) for any process may be computed from the standard molar entropies of its reactant and product species as follows:

\[ΔS^o=\sum ν\overline{S}^o_{298}(\ce{products})−\sum ν\overline{S}^o_{298}(\ce{reactants}) \label{\(\PageIndex{6}\)}\]

Here, \(ν\) represents the stoichiometric coefficients in the balanced equation for the process. For example, \(ΔS^o\) for the following reaction at room temperature

\[m\,\ce{A} + n\,\ce{B} \rightarrow x\,\ce{C} + y\,\ce{D}\]

is computed as follows:

\[ΔS^o=[x\overline{S}^o_{298}(\ce{C})+y\overline{S}^o_{298}(\ce{D})]−[m\overline{S}^o_{298}(\ce{A})+n\overline{S}^o_{298}(\ce{B})] \label{\(\PageIndex{8}\)}\]
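The “products minus reactants” sum in the equations above maps naturally onto a small Python helper. This is a sketch under our own conventions (species labels as dictionary keys, coefficients \(ν\) as values; the name `standard_entropy_change` is hypothetical), applied here to the sublimation of iodine, \(\ce{I2}(s) \rightarrow \ce{I2}(g)\), with values from Table \(\PageIndex{1}\).

```python
def standard_entropy_change(
    reactants: dict[str, float],
    products: dict[str, float],
    s298: dict[str, float],
) -> float:
    """ΔS° = Σν·S°(products) − Σν·S°(reactants), in J/K for the reaction as written.

    reactants and products map species labels to stoichiometric coefficients ν;
    s298 maps the same labels to standard molar entropies in J/(mol*K).
    """
    def weighted_sum(side: dict[str, float]) -> float:
        return sum(nu * s298[species] for species, nu in side.items())

    return weighted_sum(products) - weighted_sum(reactants)


# Sublimation of iodine, I2(s) -> I2(g), using values from Table 1
S298 = {"I2(s)": 116.1, "I2(g)": 260.7}
dS = standard_entropy_change({"I2(s)": 1}, {"I2(g)": 1}, S298)
print(f"ΔS° = {dS:.1f} J/K")  # ΔS° = 144.6 J/K
```

The large positive value fits the gas ≫ solid ordering of standard molar entropies noted earlier.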

Table \(\PageIndex{1}\) lists some standard molar entropies at 298.15 K. You can find additional standard molar entropies in Tables T1 and T2.

| Gases | | Liquids | | Solids | |
|---|---|---|---|---|---|
| Substance | \(\overline{S}^o\) [J/(mol•K)] | Substance | \(\overline{S}^o\) [J/(mol•K)] | Substance | \(\overline{S}^o\) [J/(mol•K)] |
| He | 126.2 | H2O | 70.0 | C (diamond) | 2.4 |
| H2 | 130.7 | CH3OH | 126.8 | C (graphite) | 5.7 |
| Ne | 146.3 | Br2 | 152.2 | LiF | 35.7 |
| Ar | 154.8 | CH3CH2OH | 160.7 | SiO2 (quartz) | 41.5 |
| Kr | 164.1 | C6H6 | 173.4 | Ca | 41.6 |
| Xe | 169.7 | CH3COCl | 200.8 | Na | 51.3 |
| H2O | 188.8 | C6H12 (cyclohexane) | 204.4 | MgF2 | 57.2 |
| N2 | 191.6 | C8H18 (isooctane) | 329.3 | K | 64.7 |
| O2 | 205.2 | | | NaCl | 72.1 |
| CO2 | 213.8 | | | KCl | 82.6 |
| I2 | 260.7 | | | I2 | 116.1 |

A closer examination of Table \(\PageIndex{1}\) also reveals that substances with similar molecular structures tend to have similar \(\overline{S}^o\) values. Among crystalline materials, those with the lowest entropies tend to be rigid crystals composed of small atoms linked by strong, highly directional bonds, such as diamond (\(\overline{S}^o = 2.4\) J/(mol•K)). In contrast, graphite, the softer, less rigid allotrope of carbon, has a higher \(\overline{S}^o\) (5.7 J/(mol•K)) because more microstates are available in its crystal. Soft crystalline substances and those with larger atoms tend to have higher entropies because of increased molecular motion and disorder. Similarly, the absolute entropy of a substance tends to increase with increasing molecular complexity because the number of available microstates increases with molecular complexity. For example, compare the \(\overline{S}^o\) values for CH3OH(l) (126.8 J/(mol•K)) and CH3CH2OH(l) (160.7 J/(mol•K)). Finally, substances with strong hydrogen bonds have lower values of \(\overline{S}^o\), which reflects a more ordered structure.

Entropy increases with softer, less rigid solids, solids that contain larger atoms, and solids with complex molecular structures.

To calculate \(ΔS^o\) for a chemical reaction from standard molar entropies, we use the familiar “products minus reactants” rule, in which the absolute molar entropy of each reactant and product is multiplied by its stoichiometric coefficient in the balanced chemical equation. Example \(\PageIndex{1}\) illustrates this procedure for the combustion of the liquid hydrocarbon isooctane (\(\ce{C8H18}\); 2,2,4-trimethylpentane).
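For the isooctane combustion mentioned above, the rule can be applied directly with the values in Table \(\PageIndex{1}\). This sketch assumes the balanced equation \(\ce{2 C8H18(l) + 25 O2(g) -> 16 CO2(g) + 18 H2O(g)}\), with water produced as vapor; that choice of state is our assumption, not necessarily the one used in Example \(\PageIndex{1}\). The helper name `side_entropy` is hypothetical.

```python
# Standard molar entropies at 298 K, J/(mol*K), taken from Table 1
S298 = {
    "C8H18(l)": 329.3,
    "O2(g)": 205.2,
    "CO2(g)": 213.8,
    "H2O(g)": 188.8,
}

# Assumed balanced equation (water produced as vapor):
#   2 C8H18(l) + 25 O2(g) -> 16 CO2(g) + 18 H2O(g)
reactants = {"C8H18(l)": 2, "O2(g)": 25}
products = {"CO2(g)": 16, "H2O(g)": 18}


def side_entropy(side: dict[str, int]) -> float:
    """Sum of ν·S° over one side of the equation, in J/K."""
    return sum(nu * S298[species] for species, nu in side.items())


# "Products minus reactants" rule
dS_rxn = side_entropy(products) - side_entropy(reactants)
print(f"ΔS° = {dS_rxn:.1f} J/K")  # ΔS° = 1030.6 J/K
```

Swapping in liquid water, \(\overline{S}^o = 70.0\) J/(mol•K) for \(\ce{H2O}(l)\), gives about −1107.8 J/K instead, which shows how strongly the physical state of a product affects \(ΔS^o\).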

Summary

Energy values, as you know, are all relative and must be defined on a scale that is completely arbitrary; there is no such thing as the absolute energy of a substance, so we can arbitrarily define the enthalpy or internal energy of an element in its most stable form at 298 K and 1 atm pressure as zero. The same is not true of entropy: since entropy is a measure of the “dilution” of thermal energy, it follows that the less thermal energy available to spread through a system (that is, the lower the temperature), the smaller its entropy will be. In other words, as the absolute temperature of a substance approaches zero, so does its entropy. This principle is the basis of the third law of thermodynamics, which states that the entropy of a perfectly ordered solid at 0 K is zero.

In practice, chemists determine the absolute entropy of a substance by measuring the molar heat capacity (\(C_p\)) as a function of temperature and then plotting the quantity \(C_p/T\) versus \(T\). The area under the curve between 0 K and any temperature \(T\), plus the entropy of any phase transitions in that range, is the absolute entropy of the substance at \(T\). In contrast, other thermodynamic properties, such as internal energy and enthalpy, can be evaluated only in relative terms, not absolute terms.

The second law of thermodynamics states that a spontaneous process increases the entropy of the universe, \(ΔS_{univ} > 0\). If \(ΔS_{univ} < 0\), the process is nonspontaneous, and if \(ΔS_{univ} = 0\), the system is at equilibrium. The third law of thermodynamics establishes the zero for entropy as that of a perfect, pure crystalline solid at 0 K. With only one possible microstate, the entropy is zero. We may compute the standard entropy change for a process by using standard entropy values for the reactants and products involved in the process.

Contributors

Paul Flowers (University of North Carolina - Pembroke), Klaus Theopold (University of Delaware) and Richard Langley (Stephen F. Austin State University) with contributing authors. Textbook content produced by OpenStax College is licensed under a Creative Commons Attribution License 4.0 license. Download for free at http://cnx.org/contents/85abf193-2bd...a7ac8df6@9.110).

Stephen Lower, Professor Emeritus (Simon Fraser U.) Chem1 Virtual Textbook