19.2: Entropy and the Second Law of Thermodynamics


- To understand the relationship between internal energy and entropy.

The first law of thermodynamics governs changes in the state function we have called internal energy (\(U\)). Changes in the internal energy (ΔU) are closely related to changes in the enthalpy (ΔH), which is a measure of the heat flow between a system and its surroundings at constant pressure. You also learned previously that the enthalpy change for a chemical reaction can be calculated using tabulated values of enthalpies of formation. This information, however, does not tell us whether a particular process or reaction will occur spontaneously.

Let’s consider a familiar example of spontaneous change. If a hot frying pan that has just been removed from the stove is allowed to come into contact with a cooler object, such as cold water in a sink, heat will flow from the hotter object to the cooler one, in this case usually releasing steam. Eventually both objects will reach the same temperature, at a value between the initial temperatures of the two objects. This transfer of heat from a hot object to a cooler one obeys the first law of thermodynamics: energy is conserved.

Now consider the same process in reverse. Suppose that a hot frying pan in a sink of cold water were to become hotter while the water became cooler. As long as the same amount of thermal energy was gained by the frying pan and lost by the water, the first law of thermodynamics would be satisfied. Yet we all know that such a process cannot occur: heat always flows from a hot object to a cold one, never in the reverse direction. That is, by itself the magnitude of the heat flow associated with a process does not predict whether the process will occur spontaneously.

For many years, chemists and physicists tried to identify a single measurable quantity that would enable them to predict whether a particular process or reaction would occur spontaneously. Initially, many of them focused on enthalpy changes and hypothesized that an exothermic process would always be spontaneous. But although it is true that many, if not most, spontaneous processes are exothermic, there are also many spontaneous processes that are not exothermic. For example, at a pressure of 1 atm, ice melts spontaneously at temperatures greater than 0°C, yet this is an endothermic process because heat is absorbed. Similarly, many salts (such as NH₄NO₃, NaCl, and KBr) dissolve spontaneously in water even though they absorb heat from the surroundings as they dissolve (i.e., \(\Delta H_{\textrm{soln}} > 0\)). Reactions can also be both spontaneous and highly endothermic, like the reaction of barium hydroxide with ammonium thiocyanate shown in Figure \(\PageIndex{1}\).

Thus enthalpy is not the only factor that determines whether a process is spontaneous. For example, after a cube of sugar has dissolved in a glass of water so that the sucrose molecules are uniformly dispersed in a dilute solution, they never spontaneously come back together in solution to form a sugar cube. Moreover, the molecules of a gas remain evenly distributed throughout the entire volume of a glass bulb and never spontaneously assemble in only one portion of the available volume. Explaining why these phenomena proceed spontaneously in only one direction requires an additional state function called entropy (S), a thermodynamic property of all substances that increases with their degree of "disorder". In Chapter 13, we introduced the concept of entropy in relation to solution formation. Here we further explore the nature of this state function and define it mathematically.

Entropy

Chemical and physical changes in a system may be accompanied by either an increase or a decrease in the disorder of the system, corresponding to an increase in entropy (ΔS > 0) or a decrease in entropy (ΔS < 0), respectively. As with any other state function, the change in entropy is defined as the difference between the entropies of the final and initial states: \(\Delta S = S_{\textrm{f}} - S_{\textrm{i}}\).

When a gas expands into a vacuum, its entropy increases because the increased volume allows for greater atomic or molecular disorder. The greater the number of atoms or molecules in the gas, the greater the disorder. The magnitude of the entropy of a system depends on the number of microscopic states, or microstates, associated with it (in this case, the number of distinct ways the atoms or molecules can be arranged in the available volume); the greater the number of microstates, the greater the entropy.

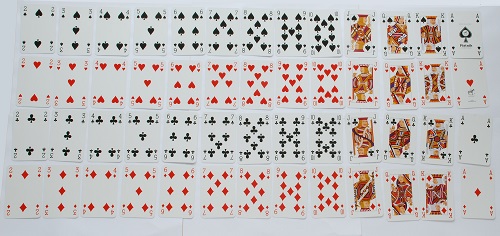

We can illustrate the concepts of microstates and entropy using a deck of playing cards, as shown in Figure \(\PageIndex{2}\). In any new deck, the 52 cards are arranged by four suits, with each suit arranged in descending order. If the cards are shuffled, however, there are approximately \(10^{68}\) different ways they might be arranged, which corresponds to \(10^{68}\) different microscopic states. The entropy of an ordered new deck of cards is therefore low, whereas the entropy of a randomly shuffled deck is high. Card games assign a higher value to a hand that has a low degree of disorder. In games such as five-card poker, only 4 of the 2,598,960 different possible hands, or microstates, contain the highly ordered and valued arrangement of cards called a royal flush, almost 1.1 million hands contain one pair, and more than 1.3 million hands are completely disordered and therefore have no value. Because the last two arrangements are far more probable than the first, the value of a poker hand is inversely related to its entropy.
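The counts quoted above can be checked with a few lines of Python. This is a minimal sketch using only the standard library's `math.factorial` and `math.comb`; the hand categories follow standard five-card poker rules, and the variable names are our own.

```python
import math

# Number of distinct orderings (microstates) of a 52-card deck: 52!
deck_orderings = math.factorial(52)
log10_orderings = math.log10(deck_orderings)  # about 67.9, i.e. roughly 10**68

# Five-card poker hands drawn from a 52-card deck
total_hands = math.comb(52, 5)

# Royal flush: exactly one per suit
royal_flush = 4

# One pair: choose the pair's rank and 2 of its 4 suits, then 3 other
# distinct ranks, each of which can be in any of the 4 suits
one_pair = 13 * math.comb(4, 2) * math.comb(12, 3) * 4**3

# "No pair" (high card): 5 distinct ranks that do not form a straight
# (10 possible straights), in suits that do not form a flush (4 flushes)
high_card = (math.comb(13, 5) - 10) * (4**5 - 4)

print(f"52! ~ 10^{log10_orderings:.1f}")
print(total_hands, royal_flush, one_pair, high_card)
```

Working with the base-10 logarithm of 52! makes it easy to see that "approximately \(10^{68}\)" is a rounding of about \(8 \times 10^{67}\).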

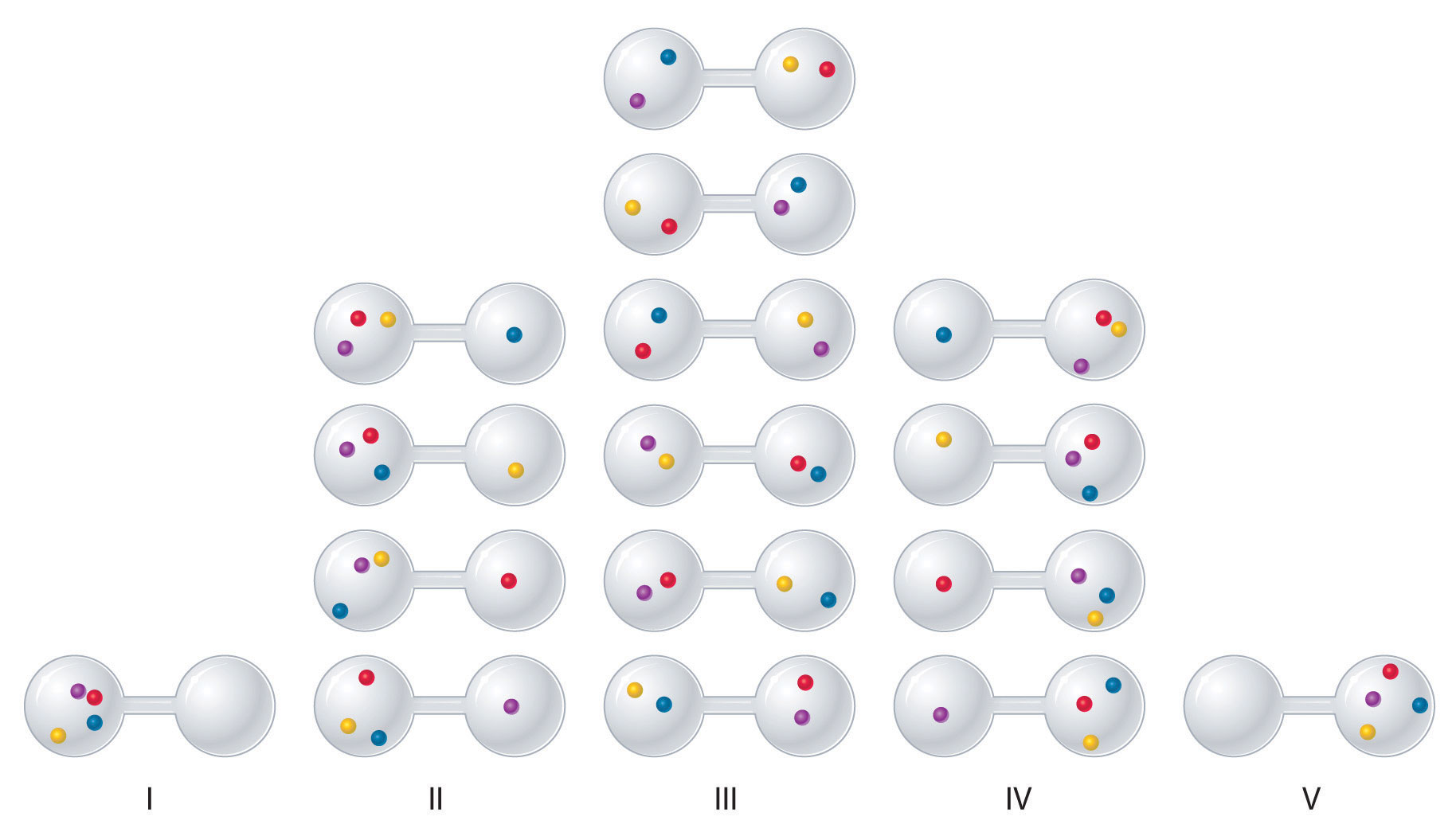

We can see how to calculate these kinds of probabilities for a chemical system by considering the possible arrangements of a sample of four gas molecules in a two-bulb container (Figure \(\PageIndex{3}\)). There are five possible arrangements: all four molecules in the left bulb (I); three molecules in the left bulb and one in the right bulb (II); two molecules in each bulb (III); one molecule in the left bulb and three molecules in the right bulb (IV); and four molecules in the right bulb (V). If we assign a different color to each molecule to keep track of it for this discussion (remember, however, that in reality the molecules are indistinguishable from one another), we can see that there are 16 different ways the four molecules can be distributed in the bulbs, each corresponding to a particular microstate. As shown in Figure \(\PageIndex{3}\), arrangement I is associated with a single microstate, as is arrangement V, so each arrangement has a probability of 1/16. Arrangements II and IV each have a probability of 4/16 because each can exist in four microstates. Similarly, six different microstates can occur as arrangement III, making the probability of this arrangement 6/16. Thus the arrangement that we would expect to encounter, with half the gas molecules in each bulb, is the most probable arrangement. The others are not impossible but simply less likely.

There are 16 different ways to distribute four gas molecules between the bulbs, with each distribution corresponding to a particular microstate. Arrangements I and V each produce a single microstate with a probability of 1/16; these are the least probable arrangements. Arrangements II and IV each produce four microstates, with a probability of 4/16. Arrangement III, with half the gas molecules in each bulb, has a probability of 6/16. It is the one encompassing the most microstates, so it is the most probable.
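The counting argument for the two-bulb container can be reproduced by brute-force enumeration. The Python sketch below treats the four molecules as labeled (as the colors in the figure do), lists every microstate, and groups them by arrangement; the labels "L" and "R" for the left and right bulbs are our own choice.

```python
from collections import Counter
from itertools import product

# Each microstate assigns each of the four labeled molecules to a bulb:
# "L" = left bulb, "R" = right bulb.
microstates = list(product("LR", repeat=4))

# Group microstates by how many molecules are in the left bulb:
# 4 -> arrangement I, 3 -> II, 2 -> III, 1 -> IV, 0 -> V
counts = Counter(state.count("L") for state in microstates)

for n_left in sorted(counts, reverse=True):
    print(f"{n_left} left, {4 - n_left} right: {counts[n_left]}/{len(microstates)}")
```

The printed fractions reproduce the probabilities 1/16, 4/16, 6/16, 4/16, and 1/16 for arrangements I through V.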

Instead of four molecules of gas, let’s now consider 1 L of an ideal gas at standard temperature and pressure (STP), which contains \(2.69 \times 10^{22}\) molecules (\(6.022 \times 10^{23}\) molecules/22.4 L). If we allow the sample of gas to expand into a second 1 L container, the probability of finding all \(2.69 \times 10^{22}\) molecules in one container and none in the other at any given time is extremely small, approximately \(\dfrac{2}{2^{2.69 \times 10^{22}}}\) (each molecule is equally likely to be in either container, so there are \(2^{N}\) equally probable microstates for N molecules, and only two of them place every molecule in the same container). The probability of such an occurrence is effectively zero. Although nothing prevents the molecules in the gas sample from occupying only one of the two bulbs, that particular arrangement is so improbable that it is never actually observed. The probability of arrangements with essentially equal numbers of molecules in each bulb is quite high, however, because there are many equivalent microstates in which the molecules are distributed equally. Hence a macroscopic sample of a gas occupies all of the space available to it, simply because this is the most probable arrangement.
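The probability \(2/2^{N}\) is far too small to represent as an ordinary floating-point number, so a practical way to evaluate it is through its logarithm. The Python sketch below assumes, as the text does, that each molecule is independently equally likely to be in either of two equal-volume bulbs; the function name `log10_prob_all_in_one_bulb` is hypothetical.

```python
import math

def log10_prob_all_in_one_bulb(n_molecules: float) -> float:
    """Base-10 log of the probability that all molecules share one bulb.

    Assumes each molecule is independently equally likely to be in either
    of two equal-volume bulbs, so P = 2 / 2**N. For macroscopic N this is
    far below the smallest positive float, so only log10(P) is computed.
    """
    return math.log10(2) - n_molecules * math.log10(2)

# Check against the four-molecule case (arrangements I and V together: 2/16)
print(10 ** log10_prob_all_in_one_bulb(4))

# 1 L of ideal gas at STP
n_stp = 6.022e23 / 22.4
print(f"N = {n_stp:.3g}, log10(P) = {log10_prob_all_in_one_bulb(n_stp):.3g}")
```

For the STP sample, \(\log_{10} P\) comes out near \(-8 \times 10^{21}\); that is, the probability has roughly eight thousand billion billion zeros after the decimal point.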

A disordered system has a greater number of possible microstates than does an ordered system, so it has a higher entropy. This is most clearly seen in the entropy changes that accompany phase transitions, such as solid to liquid or liquid to gas. As you know, a crystalline solid is composed of an ordered array of molecules, ions, or atoms that occupy fixed positions in a lattice, whereas the molecules in a liquid are free to move and tumble within the volume of the liquid; molecules in a gas have even more freedom to move than those in a liquid. Each additional kind of motion increases the number of available microstates, resulting in a higher entropy. Thus the entropy of a system must increase during melting (\(\Delta S_{\textrm{fus}} > 0\)). Similarly, when a liquid is converted to a vapor, the greater freedom of motion of the molecules in the gas phase means that \(\Delta S_{\textrm{vap}} > 0\). Conversely, the reverse processes (condensing a vapor to form a liquid or freezing a liquid to form a solid) must be accompanied by a decrease in the entropy of the system: ΔS < 0.

Entropy (S) is a thermodynamic property of all substances that increases with their degree of disorder. The greater the number of possible microstates for a system, the greater the disorder and the higher the entropy.

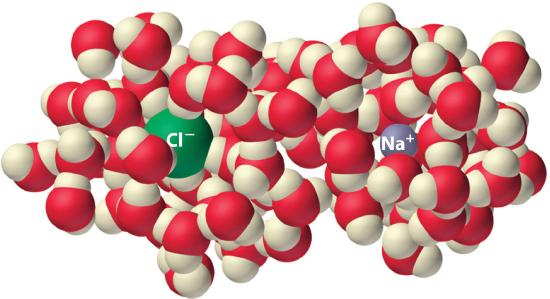

Experiments show that the magnitude of \(\Delta S_{\textrm{vap}}\) is 80–90 J/(mol•K) for a wide variety of liquids with different boiling points. However, liquids that have highly ordered structures due to hydrogen bonding or other intermolecular interactions tend to have significantly higher values of \(\Delta S_{\textrm{vap}}\). For instance, \(\Delta S_{\textrm{vap}}\) for water is 102 J/(mol•K). Another process that is accompanied by entropy changes is the formation of a solution. As illustrated in Figure \(\PageIndex{4}\), the formation of a liquid solution from a crystalline solid (the solute) and a liquid solvent is expected to result in an increase in the number of available microstates of the system and hence its entropy. Indeed, dissolving a substance such as NaCl in water disrupts both the ordered crystal lattice of NaCl and the ordered hydrogen-bonded structure of water, leading to an increase in the entropy of the system. At the same time, however, each dissolved Na⁺ ion becomes hydrated by an ordered arrangement of at least six water molecules, and the Cl⁻ ions also cause the water to adopt a particular local structure. Both of these effects increase the order of the system, leading to a decrease in entropy. The overall entropy change for the formation of a solution therefore depends on the relative magnitudes of these opposing factors. In the case of an NaCl solution, disruption of the crystalline NaCl structure and the hydrogen-bonded interactions in water is quantitatively more important, so \(\Delta S_{\textrm{soln}} > 0\).

Dissolving NaCl in water results in an increase in the entropy of the system. Each hydrated ion, however, forms an ordered arrangement with water molecules, which decreases the entropy of the system. The magnitude of the increase is greater than the magnitude of the decrease, so the overall entropy change for the formation of an NaCl solution is positive.

Predict which substance in each pair has the higher entropy and justify your answer.

- 1 mol of NH₃(g) or 1 mol of He(g), both at 25°C

- 1 mol of Pb(s) at 25°C or 1 mol of Pb(l) at 800°C

Given: amounts of substances and temperature

Asked for: higher entropy

Strategy:

From the number of atoms present and the phase of each substance, predict which has the greater number of available microstates and hence the higher entropy.

Solution:

- Both substances are gases at 25°C, but one consists of He atoms and the other consists of NH₃ molecules. With four atoms instead of one, the NH₃ molecules have more motions available, leading to a greater number of microstates. Hence we predict that the NH₃ sample will have the higher entropy.

- The nature of the atomic species is the same in both cases, but the phase is different: one sample is a solid, and one is a liquid. Based on the greater freedom of motion available to atoms in a liquid, we predict that the liquid sample will have the higher entropy.

Predict which substance in each pair has the higher entropy and justify your answer.

- 1 mol of He(g) at 10 K and 1 atm pressure or 1 mol of He(g) at 250°C and 0.2 atm

- a mixture of 3 mol of H₂(g) and 1 mol of N₂(g) at 25°C and 1 atm or a sample of 2 mol of NH₃(g) at 25°C and 1 atm

- Answer a: 1 mol of He(g) at 250°C and 0.2 atm (higher temperature and lower pressure indicate greater volume and more microstates)

- Answer b: a mixture of 3 mol of H₂(g) and 1 mol of N₂(g) at 25°C and 1 atm (more molecules of gas are present)


Reversible and Irreversible Changes

Changes in entropy (ΔS), together with changes in enthalpy (ΔH), enable us to predict in which direction a chemical or physical change will occur spontaneously. Before discussing how to do so, however, we must understand the difference between a reversible process and an irreversible one. In a reversible process, every intermediate state between the extremes is an equilibrium state, regardless of the direction of the change. In contrast, an irreversible process is one in which the intermediate states are not equilibrium states, so change occurs spontaneously in only one direction. As a result, a reversible process can change direction at any time, whereas an irreversible process cannot. When a gas expands reversibly against an external pressure such as a piston, for example, the expansion can be reversed at any time by reversing the motion of the piston; once the gas is compressed, it can be allowed to expand again, and the process can continue indefinitely. In contrast, the expansion of a gas into a vacuum (\(P_{\textrm{ext}} = 0\)) is irreversible because the external pressure is measurably less than the internal pressure of the gas. No equilibrium states exist, and the gas expands irreversibly. When gas escapes from a microscopic hole in a balloon into a vacuum, for example, the process is irreversible; the direction of airflow cannot change.

Because work done during the expansion of a gas depends on the opposing external pressure (\(w = -P_{\textrm{ext}}\Delta V\)), and in a reversible expansion \(P_{\textrm{ext}}\) is kept only infinitesimally below the pressure of the gas at every step, the work done by a gas expanding reversibly is always equal to or greater in magnitude than the work done in a corresponding irreversible expansion: \(|w_{\textrm{rev}}| \ge |w_{\textrm{irrev}}|\). Whether a process is reversible or irreversible, ΔU = q + w. Because U is a state function, the magnitude of ΔU does not depend on reversibility and is independent of the path taken. So

\[ΔU = q_{rev} + w_{rev} = q_{irrev} + w_{irrev} \label{Eq1} \]

The work done by a gas in a reversible expansion is always equal to or greater in magnitude than the work done in a corresponding irreversible expansion: \(|w_{\textrm{rev}}| \ge |w_{\textrm{irrev}}|\).
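As a rough numerical check on this inequality, the Python sketch below compares three ways of expanding 1 mol of an ideal gas isothermally to twice its volume at 298.15 K (values chosen only for illustration). It assumes the standard ideal-gas results that the reversible path gives \(w_{\textrm{rev}} = -nRT\ln(V_2/V_1)\) and that a one-step expansion against a constant external pressure equal to the final pressure gives \(w = -nRT(1 - V_1/V_2)\); neither formula is derived in this section, and the function name `expansion_work` is hypothetical.

```python
import math

R = 8.314  # gas constant, J/(mol·K)

def expansion_work(n, T, v_ratio):
    """Work done ON an ideal gas (J) for an isothermal expansion V1 -> V2.

    v_ratio = V2/V1 > 1. The sign convention matches w = -P_ext * dV, so
    work done by the gas on its surroundings comes out negative.
    """
    w_rev = -n * R * T * math.log(v_ratio)        # reversible path
    w_one_step = -n * R * T * (1 - 1 / v_ratio)   # P_ext held at final pressure
    w_vacuum = 0.0                                # free expansion, P_ext = 0
    return w_rev, w_one_step, w_vacuum

w_rev, w_one_step, w_vacuum = expansion_work(n=1.0, T=298.15, v_ratio=2.0)
print(f"reversible:          {w_rev:8.1f} J")
print(f"one-step, P_ext=P2:  {w_one_step:8.1f} J")
print(f"into vacuum:         {w_vacuum:8.1f} J")
# For an isothermal ideal gas, dU = 0, so q = -w on every path.
```

With the sign convention \(w = -P_{\textrm{ext}}\Delta V\), all three values are zero or negative, and the reversible value has the largest magnitude. Because ΔU = 0 on every path for an isothermal ideal gas, the heat absorbed (q = −w) differs from path to path even though ΔU does not.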

In other words, ΔU for a process is the same whether that process is carried out in a reversible manner or an irreversible one. We now return to our earlier definition of entropy, using the magnitude of the heat flow for a reversible process (\(q_{\textrm{rev}}\)) to define entropy quantitatively.

The Relationship between Internal Energy and Entropy

Because the quantity of heat transferred (\(q_{\textrm{rev}}\)) is directly proportional to the absolute temperature of an object (T) (\(q_{\textrm{rev}} \propto T\)), the hotter the object, the greater the amount of heat transferred. Moreover, adding heat to a system increases the kinetic energy of the component atoms and molecules and hence their disorder (\(\Delta S \propto q_{\textrm{rev}}\)). Combining these relationships for any reversible process,

\[q_{\textrm{rev}}=T\Delta S\;\textrm{ and }\;\Delta S=\dfrac{q_{\textrm{rev}}}{T} \label{Eq2} \]

Because the numerator (\(q_{\textrm{rev}}\)) is expressed in units of energy (joules), the units of ΔS are joules/kelvin (J/K). Recognizing that the work done in a reversible process at constant pressure is \(w_{\textrm{rev}} = -P\Delta V\), we can express Equation \(\ref{Eq1}\) as follows:

\[ \begin{align} ΔU &= q_{rev} + w_{rev} \\[4pt] &= TΔS − PΔV \label{Eq3} \end{align} \]

Thus the change in the internal energy of the system is related to the change in entropy, the absolute temperature, and the \(PV\) work done.

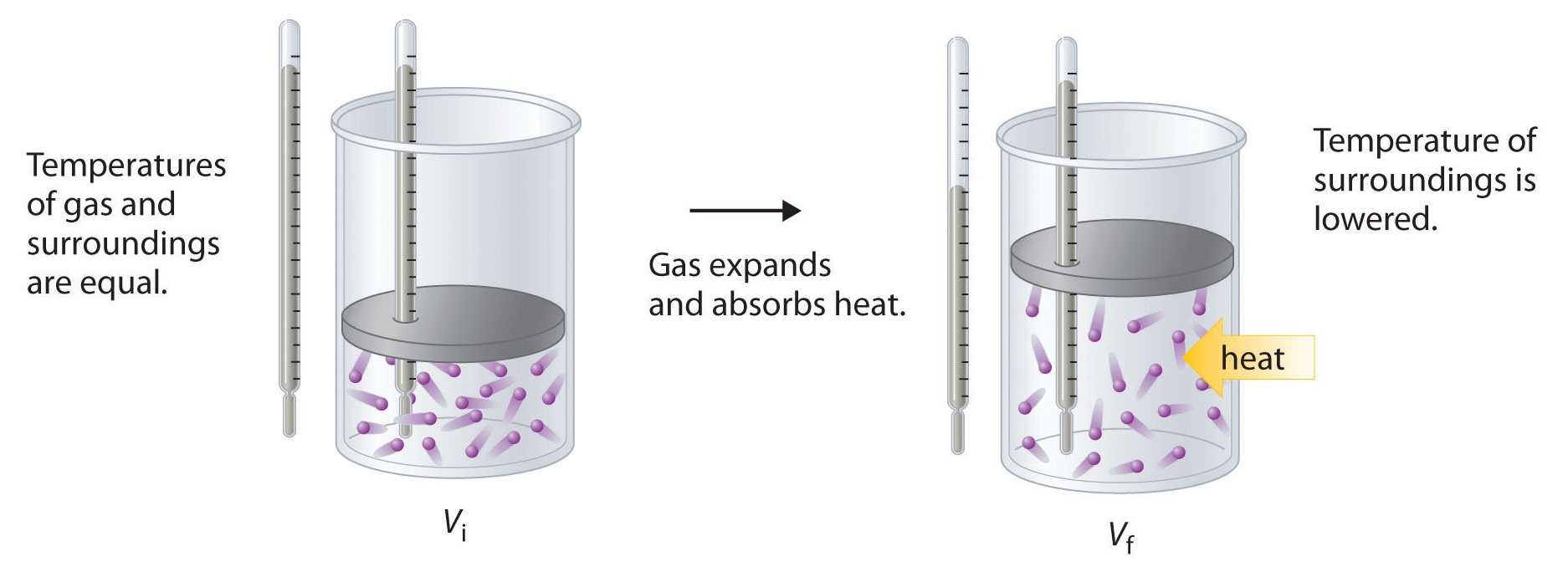

To illustrate the use of Equation \(\ref{Eq2}\) and Equation \(\ref{Eq3}\), we consider two reversible processes before turning to an irreversible process. When a sample of an ideal gas is allowed to expand reversibly at constant temperature, heat must be added to the gas during expansion to keep its \(T\) constant (Figure \(\PageIndex{5}\)). The internal energy of the gas does not change because the temperature of the gas does not change; that is, \(ΔU = 0\) and \(q_{rev} = −w_{rev}\). During expansion, ΔV > 0, so the gas performs work on its surroundings:

\[w_{rev} = −PΔV < 0. \nonumber \]

According to Equation \(\ref{Eq3}\), with ΔU = 0 we have \(T\Delta S = P\Delta V > 0\), which means that \(q_{\textrm{rev}}\) must be positive; that is, the gas must absorb heat from the surroundings during expansion, and the surroundings must give up that same amount of heat. The entropy change of the system is therefore \(\Delta S_{\textrm{sys}} = +q_{\textrm{rev}}/T\), and the entropy change of the surroundings is

\[ΔS_{surr} = −\dfrac{q_{rev}}{T}. \nonumber \]

The corresponding change in entropy of the universe is then as follows:

\[ \begin{align*} \Delta S_{\textrm{univ}} &=\Delta S_{\textrm{sys}}+\Delta S_{\textrm{surr}} \\[4pt] &= \dfrac{q_{\textrm{rev}}}{T}+\left(-\dfrac{q_\textrm{rev}}{T}\right) \\[4pt] &= 0 \label{Eq4} \end{align*} \]

Thus the entropy of the universe does not change: \(\Delta S_{\textrm{univ}} = 0\).
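The bookkeeping for this reversible expansion can be sketched numerically. The Python example below assumes an ideal gas, for which ΔU = 0 at constant temperature, and uses the standard result \(q_{\textrm{rev}} = nRT\ln(V_2/V_1)\) for the heat absorbed (not derived in this section); the amount, temperature, and volume ratio are illustrative values, and the function name is hypothetical.

```python
import math

R = 8.314  # gas constant, J/(mol·K)

def reversible_isothermal_entropy(n, T, v_ratio):
    """Entropy changes (J/K) for a reversible isothermal ideal-gas expansion.

    Assumes q_rev = -w_rev = nRT ln(V2/V1) (dU = 0 for an ideal gas at
    constant T) and that the surroundings supply q_rev at the same T.
    """
    q_rev = n * R * T * math.log(v_ratio)  # heat absorbed by the gas
    dS_sys = q_rev / T                    # Delta S = q_rev / T
    dS_surr = -q_rev / T                  # surroundings lose the same heat
    return dS_sys, dS_surr, dS_sys + dS_surr

dS_sys, dS_surr, dS_univ = reversible_isothermal_entropy(1.0, 298.15, 2.0)
print(f"dS_sys = {dS_sys:.2f} J/K, dS_surr = {dS_surr:.2f} J/K, dS_univ = {dS_univ:.2f} J/K")
```

The system gains exactly the entropy the surroundings lose, so the sum is zero, as in the derivation above.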

In the initial state (top), the temperatures of a gas and the surroundings are the same. During the reversible expansion of the gas, heat must be added to the gas to maintain a constant temperature. Thus the internal energy of the gas does not change, but work is performed on the surroundings. In the final state (bottom), the temperature of the surroundings is lower because the gas has absorbed heat from the surroundings during expansion.

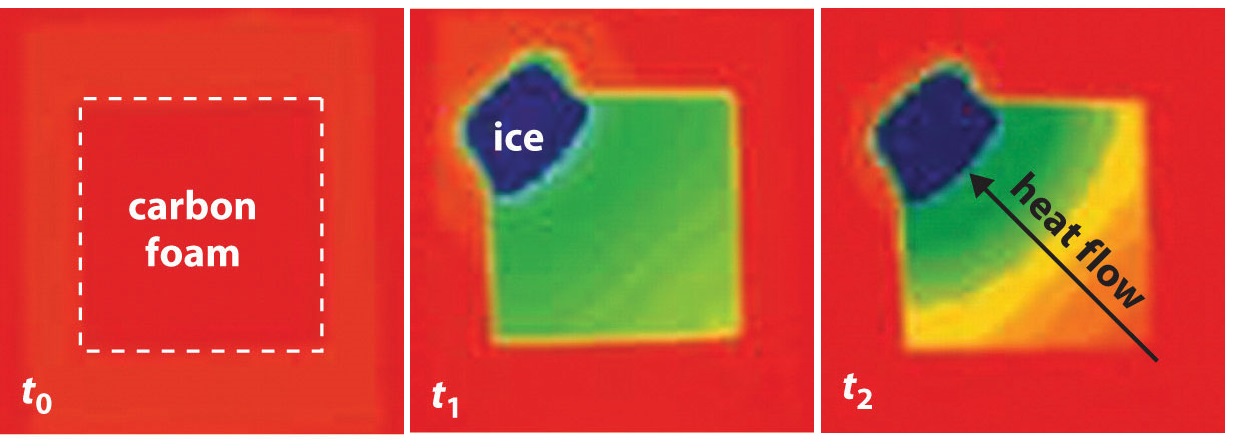

Now consider the reversible melting of a sample of ice at 0°C and 1 atm. The enthalpy of fusion of ice is 6.01 kJ/mol, which means that 6.01 kJ of heat is absorbed reversibly from the surroundings when 1 mol of ice melts at 0°C, as illustrated in Figure \(\PageIndex{6}\). The surroundings constitute a sample of low-density carbon foam that is thermally conductive, and the system is the ice cube that has been placed on it. The direction of heat flow along the resulting temperature gradient is indicated with an arrow. From Equation \(\ref{Eq2}\), we see that the entropy of fusion of ice can be written as follows:

\[\Delta S_{\textrm{fus}}=\dfrac{q_{\textrm{rev}}}{T}=\dfrac{\Delta H_{\textrm{fus}}}{T} \nonumber \]

By convention, a thermogram shows cold regions in blue, warm regions in red, and thermally intermediate regions in green. When an ice cube (the system, dark blue) is placed on the corner of a square sample of low-density carbon foam with very high thermal conductivity, the temperature of the foam is lowered (going from red to green). As the ice melts, a temperature gradient appears, ranging from warm to very cold. An arrow indicates the direction of heat flow from the surroundings (red and green) to the ice cube. The amount of heat lost by the surroundings is the same as the amount gained by the ice, so the entropy of the universe does not change.

In this case, \(\Delta S_{\textrm{fus}}\) = (6.01 kJ/mol)/(273 K) = 22.0 J/(mol•K) = \(\Delta S_{\textrm{sys}}\). The amount of heat lost by the surroundings is the same as the amount gained by the ice, so \(\Delta S_{\textrm{surr}} = -q_{\textrm{rev}}/T\) = −(6.01 kJ/mol)/(273 K) = −22.0 J/(mol•K). Once again, we see that the entropy of the universe does not change:

\(\Delta S_{\textrm{univ}} = \Delta S_{\textrm{sys}} + \Delta S_{\textrm{surr}}\) = 22.0 J/(mol•K) − 22.0 J/(mol•K) = 0
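The same arithmetic, including the kJ-to-J conversion that is easy to drop, can be written out in a few lines of Python. This sketch uses the enthalpy of fusion from the text and takes 0°C as 273.15 K (the text rounds to 273 K; both give 22.0 J/(mol•K) to three significant figures).

```python
dH_fus = 6.01e3   # J/mol, enthalpy of fusion of ice (from the text)
T_melt = 273.15   # K, 0 °C

q_rev = dH_fus                 # heat absorbed reversibly by 1 mol of ice
dS_sys = q_rev / T_melt        # ice (the system) gains entropy
dS_surr = -q_rev / T_melt      # foam (the surroundings) loses the same heat
dS_univ = dS_sys + dS_surr

print(f"dS_sys  = {dS_sys:.1f} J/(mol·K)")
print(f"dS_surr = {dS_surr:.1f} J/(mol·K)")
print(f"dS_univ = {dS_univ:.1f} J/(mol·K)")
```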

In these two examples of reversible processes, the entropy of the universe is unchanged. This is true of all reversible processes and constitutes part of the second law of thermodynamics: the entropy of the universe remains constant in a reversible process, whereas the entropy of the universe increases in an irreversible (spontaneous) process.

The entropy of the universe increases during a spontaneous process. A nonspontaneous process can be observed only when it is driven by coupling to a spontaneous one, and even then the total entropy of the universe increases.

As an example of an irreversible process, consider the entropy changes that accompany the spontaneous and irreversible transfer of heat from a hot object to a cold one, as occurs when lava spewed from a volcano flows into cold ocean water. Let q (> 0) be the amount of heat transferred. The cold substance, the water, gains this heat, so the change in the entropy of the water can be written as \(\Delta S_{\textrm{cold}} = q/T_{\textrm{cold}}\). Similarly, the hot substance, the lava, loses the same amount of heat, so its entropy change can be written as \(\Delta S_{\textrm{hot}} = -q/T_{\textrm{hot}}\), where \(T_{\textrm{cold}}\) and \(T_{\textrm{hot}}\) are the temperatures of the cold and hot substances, respectively. The total entropy change of the universe accompanying this process is therefore

\[\Delta S_{\textrm{univ}}=\Delta S_{\textrm{cold}}+\Delta S_{\textrm{hot}}=\dfrac{q}{T_{\textrm{cold}}}+\left(-\dfrac{q}{T_{\textrm{hot}}}\right) \label{Eq6} \]

The numerators on the right side of Equation \(\ref{Eq6}\) are the same in magnitude but opposite in sign. Whether \(\Delta S_{\textrm{univ}}\) is positive or negative therefore depends on the relative magnitudes of the denominators. By definition, \(T_{\textrm{hot}} > T_{\textrm{cold}}\), so \(q/T_{\textrm{hot}}\) is smaller than \(q/T_{\textrm{cold}}\): the entropy lost by the lava is less than the entropy gained by the water, and \(\Delta S_{\textrm{univ}}\) must be positive. As predicted by the second law of thermodynamics, the entropy of the universe increases during this irreversible process. Any process for which \(\Delta S_{\textrm{univ}}\) is positive is, by definition, a spontaneous one that will occur as written. Conversely, any process for which \(\Delta S_{\textrm{univ}}\) is negative will not occur as written but will occur spontaneously in the reverse direction. We see, therefore, that heat is spontaneously transferred from a hot substance, the lava, to a cold substance, the ocean water. In fact, if the lava is hot enough (e.g., if it is molten), so much heat can be transferred that the water is converted to steam (Figure \(\PageIndex{7}\)).
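Equation \(\ref{Eq6}\) is easy to evaluate for sample numbers. The Python sketch below is illustrative only: the temperatures (about 1400 K for lava and 290 K for seawater) and the 1.00 kJ of heat are hypothetical values, and it assumes both bodies are large enough that their temperatures stay essentially constant while the heat is transferred. A negative q represents heat flowing the "wrong" way, from cold to hot.

```python
def entropy_change_universe(q, T_hot, T_cold):
    """Delta S_univ (J/K) for heat q (J) flowing from the hot body to the cold one.

    A negative q means heat flows from the cold body to the hot one.
    Assumes both temperatures (K) stay essentially constant during transfer.
    """
    dS_cold = q / T_cold     # cold body gains q
    dS_hot = -q / T_hot      # hot body loses q
    return dS_cold + dS_hot

# Hypothetical values: lava at ~1400 K, seawater at ~290 K, 1.00 kJ of heat
forward = entropy_change_universe(1000.0, T_hot=1400.0, T_cold=290.0)
reverse = entropy_change_universe(-1000.0, T_hot=1400.0, T_cold=290.0)
print(f"hot -> cold: {forward:+.2f} J/K")
print(f"cold -> hot: {reverse:+.2f} J/K")
```

The hot-to-cold direction gives a positive \(\Delta S_{\textrm{univ}}\) and the reverse gives a negative one of the same size, which is why only the first occurs spontaneously.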

Tin has two allotropes with different structures. Gray tin (α-tin) has a structure similar to that of diamond, whereas white tin (β-tin) is denser, with a unit cell structure that is based on a rectangular prism. At temperatures greater than 13.2°C, white tin is the more stable phase, but below that temperature, it slowly converts reversibly to the less dense, powdery gray phase. This phenomenon was argued to have plagued Napoleon’s army during his ill-fated invasion of Russia in 1812: the buttons on his soldiers’ uniforms were made of tin and may have disintegrated during the Russian winter, adversely affecting the soldiers’ health (and morale). The conversion of white tin to gray tin is exothermic, with ΔH = −2.1 kJ/mol at 13.2°C.

- What is ΔS for this process?

- Which is the more highly ordered form of tin—white or gray?

Given: ΔH and temperature

Asked for: ΔS and relative degree of order

Strategy:

Use Equation \(\ref{Eq2}\) to calculate the change in entropy for the reversible phase transition. From the calculated value of ΔS, predict which allotrope has the more highly ordered structure.

Solution

- We know from Equation \(\ref{Eq2}\) that the entropy change for any reversible process is the heat transferred (in joules) divided by the temperature at which the process occurs. Because the conversion occurs at constant pressure, and ΔH and ΔU are essentially equal for reactions that involve only solids, we can calculate the change in entropy for the reversible phase transition where \(q_{\textrm{rev}}\) = ΔH. Substituting the given values for ΔH and temperature in kelvins (in this case, T = 13.2°C = 286.4 K),

\[\Delta S=\dfrac{q_{\textrm{rev}}}{T}=\dfrac{\Delta H}{T}=\dfrac{(-2.1\;\textrm{kJ/mol})(1000\;\textrm{J/kJ})}{286.4\;\textrm{K}}=-7.3\;\textrm{J/(mol}\cdot\textrm{K)} \nonumber \]

- The fact that ΔS < 0 means that entropy decreases when white tin is converted to gray tin. Thus gray tin must be the more highly ordered structure.
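The calculation in this example follows a pattern that recurs for any reversible phase transition at constant pressure: convert the temperature to kelvins, convert ΔH to joules, and divide. A minimal Python sketch, assuming \(q_{\textrm{rev}} = \Delta H\) and that the transition occurs at its equilibrium temperature (the function name `transition_entropy` is hypothetical):

```python
def transition_entropy(dH_kJ_per_mol, t_celsius):
    """Entropy of a reversible phase transition, dS = q_rev/T = dH/T, in J/(mol·K).

    Assumes constant pressure (so q_rev = dH) and that the transition
    takes place at its equilibrium temperature t_celsius.
    """
    T = t_celsius + 273.15           # convert °C to kelvins
    return dH_kJ_per_mol * 1000 / T  # convert kJ to J, then divide by T

dS_tin = transition_entropy(-2.1, 13.2)   # white tin -> gray tin
print(f"dS = {dS_tin:.1f} J/(mol·K)")
print("gray tin is more ordered" if dS_tin < 0 else "white tin is more ordered")
```

The same function can be applied to the sulfur data in the exercise that follows and the result compared with its answer.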

Note: Whether failing buttons were indeed a contributing factor in the failure of the invasion remains disputed; critics of the theory point out that the tin used would have been quite impure and thus more tolerant of low temperatures. Laboratory tests provide evidence that the time required for unalloyed tin to develop significant tin pest damage at lowered temperatures is about 18 months, which is more than twice the length of Napoleon's Russian campaign. It is clear, though, that some of the regiments employed in the campaign had tin buttons and that the temperature reached sufficiently low values (at least −40°C).

Elemental sulfur exists in two forms: an orthorhombic form (Sα), which is stable below 95.3°C, and a monoclinic form (Sβ), which is stable above 95.3°C. The conversion of orthorhombic sulfur to monoclinic sulfur is endothermic, with ΔH = 0.401 kJ/mol at 1 atm.

- What is ΔS for this process?

- Which is the more highly ordered form of sulfur—Sα or Sβ?

- Answer a: 1.09 J/(mol•K)

- Answer b: Sα


Summary

For a given system, the greater the number of microstates, the higher the entropy. During a spontaneous process, the entropy of the universe increases. \[\Delta S=\frac{q_{\textrm{rev}}}{T} \nonumber \]

A measure of the disorder of a system is its entropy (S), a state function whose value increases with an increase in the number of available microstates. A reversible process is one for which all intermediate states between extremes are equilibrium states; it can change direction at any time. In contrast, an irreversible process occurs in one direction only. The change in entropy of the system or the surroundings is the quantity of heat transferred reversibly divided by the absolute temperature. The second law of thermodynamics states that in a reversible process, the entropy of the universe is constant, whereas in an irreversible process, such as the transfer of heat from a hot object to a cold object, the entropy of the universe increases.